Artificial Intelligence and Systemic Financial Risk

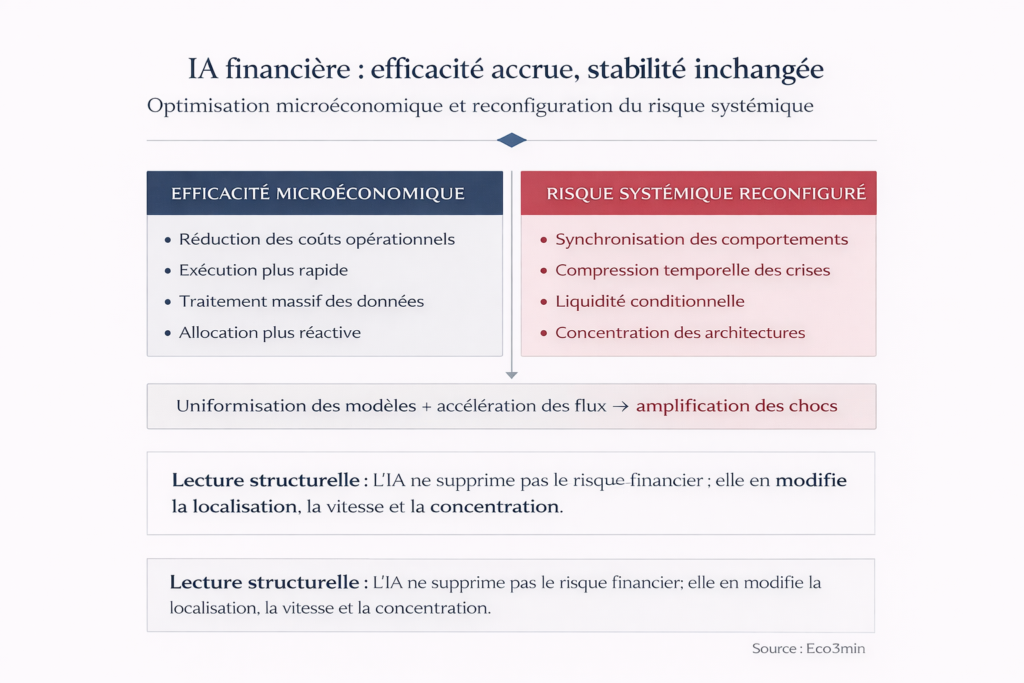

Artificial intelligence is reshaping the architecture of the global financial system. But microeconomic efficiency gains do not automatically translate into better control of systemic risk — they reconfigure it, concentrate it, and accelerate it.

How AI Transforms the Structure of Financial Risk Without Reducing It

AI does not reduce financial risk — it displaces it, concentrates it, and accelerates it.

Artificial intelligence generates substantial efficiency gains at the level of individual financial actors — lower transaction costs, optimized execution, large-scale data processing. But these microeconomic benefits coexist with a structural transformation of systemic risk: behavioral synchronization, time compression of crises, architectural concentration, and conditional liquidity.

The most common analytical mistake is to equate technological sophistication with systemic robustness. For regulators, asset allocators, and risk managers, understanding what AI truly optimizes — and what it shifts or amplifies at the macro-financial level — is a prerequisite for any serious assessment of system stability. This article analyzes the mechanisms through which AI reshapes the map of financial risks and their implications for the current cycle.

- Algorithmic trading — automated order execution based on quantitative signals

- Automated risk management — credit scoring, fraud detection, ML-based VaR models

- High-frequency market making — algorithmic liquidity provision at millisecond scale

- Quantitative allocation — portfolio construction and rebalancing via optimization models

- Financial data and cloud infrastructure — infrastructure providers (cloud, data, models) underpinning the entire value chain

Algorithmic finance is expanding at a sustained pace, driven by measurable optimization promises: by 2025, consolidated estimates indicate automation has reduced operating expenses by 20–30% in the most standardized market segments (McKinsey Global Institute data, FSB reports). But as AI penetrates the core mechanisms of markets and financial institutions, a paradox emerges: what makes each actor individually more efficient can make the system collectively more fragile. The benefits observed at the individual level — better execution, faster processing, finer calibration — transform the organization of flows and capital allocation without disarming the mechanisms that generate systemic risk.

This micro/macro paradox is formalized in research by the BIS (Annual Report 2024, chapter on AI and financial stability) and by the Financial Stability Board (FSB, “Artificial Intelligence and Machine Learning in Financial Services,” 2024), both of which identify the synchronization of algorithmic behavior as a first-order emerging risk factor. The analysis fits within the broader question of how financial markets function and ties into the disconnect between financial costs and systemic costs.

- AI reduces individual costs by 20–30% but reshapes systemic risk through three channels: synchronization, time compression, concentration

- Liquidity becomes conditional: abundant in normal regimes, prone to evaporate when algorithms converge in the same direction

- Technological refinement does not substitute for macro-financial stabilization mechanisms

AI does not reduce financial risk — it displaces it, concentrates it, and accelerates it. Microeconomic efficiency gains (20–30% reduction in operating costs) coexist with a structural reconfiguration of systemic risk through three channels: behavioral synchronization (shared models → simultaneous repositioning), time compression (shorter but more violent crises), and architectural concentration (dependence on a limited number of infrastructure providers). Liquidity becomes procyclical and conditional — abundant in calm regimes, rationed under stress. This micro-efficiency / macro-fragility paradox is documented by the BIS and FSB; its precise calibration in the current cycle — marked by positive real rates and incomplete monetary normalization — remains open.

The Core Mechanism: How Micro Efficiency Produces Macro Fragility

The transformation of financial risk by AI follows a causal chain whose central mechanism is the composition paradox: what is optimal at the individual level becomes destabilizing at the system level when all actors simultaneously adopt the same tools and logic.

Widespread adoption of similar models → Synchronization of signals and repositioning → Time compression of adjustments → Conditional liquidity (abundant in calm, rationed in stress) → Nonlinear shock amplification

Risk does not disappear — it becomes time-concentrated and synchronized across actors.

Trigger: homogenization of models and architectures. The starting point is not AI as a technology, but its generalization across the financial system. When most actors — investment banks, quant funds, insurers, market makers — rely on similar model architectures, trained on comparable datasets and optimized for converging objective functions, individual decisions tend to align. The FSB (2024) describes this phenomenon as “model monoculture”: the apparent diversity of actors masks growing homogeneity in algorithmic reasoning. A BIS working paper (Carstens, 2024, “AI and the macro-financial landscape”) formalizes the mechanism by showing that model convergence reduces diversity of expectations — a factor that financial theory considers essential for market stability through the offsetting of individual errors.

Transmission channel: synchronization of repositioning. When models converge, repositioning signals converge as well. A single data release (employment, inflation, central bank decision) triggers similar reactions across hundreds of algorithms within milliseconds — buying or selling in the same direction, on the same instruments, at the same time. This synchronization mechanically amplifies the price impact of each new data point: intraday volatility rises not because information is more “shocking,” but because reactions are more uniform. Market data show that average reaction time to macro releases (NFP, CPI, Fed decisions) has fallen from several minutes in 2015 to a few seconds in 2025 — a compression that narrows the window for response diversity and amplifies the first reaction wave.
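The loss of offsetting errors can be made concrete with a small calculation. The sketch below is an illustration, not a model taken from the BIS or FSB work cited: it assumes `n` algorithms whose signals have equal variance and share one common pairwise correlation `rho` (both hypothetical parameters), and computes the variance of their average signal. When signals are independent, individual errors largely cancel; as `rho` approaches 1, the aggregate moves almost as much as any single model.

```python
def aggregate_signal_variance(n: int, rho: float, sigma: float = 1.0) -> float:
    """Variance of the average of n signals with equal variance sigma**2
    and equal pairwise correlation rho (an equicorrelation assumption)."""
    if n < 1:
        raise ValueError("n must be at least 1")
    if not 0.0 <= rho <= 1.0:
        raise ValueError("rho must lie in [0, 1] for this sketch")
    # Var(mean) = sigma^2 * (1 + (n - 1) * rho) / n
    return sigma ** 2 * (1.0 + (n - 1) * rho) / n


# Compare a diverse population with increasingly homogeneous ones.
for rho in (0.0, 0.3, 0.9):
    print(rho, aggregate_signal_variance(100, rho))
```

With 100 independent models the variance of the average falls to one hundredth of a single model's variance; at full correlation there is no reduction at all, which is the formal sense in which homogeneous reactions amplify the first wave.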

Amplifier: time compression and conditional liquidity. AI’s most far-reaching structural effect on finance is the compression of decision times and, by extension, of crisis timelines. Stress episodes unfold in hours rather than days; corrections occur within sessions rather than weeks. This acceleration transforms the very nature of liquidity, which becomes procyclical and conditional. In calm periods, market-making algorithms provide abundant liquidity (tight spreads, deep order books). In stress periods, when signals converge in the same direction and volatility crosses predefined thresholds, those same algorithms reduce exposure, widen spreads, or temporarily withdraw, creating liquidity droughts precisely when liquidity is most needed. This is the conditional-liquidity dynamic seen in fast shocks amplified by automation, and it is closely tied to the compression of market time. The IMF (Global Financial Stability Report, October 2025) notes that the frequency of flash crashes and mini liquidity dislocations tripled between 2019 and 2025, a pattern consistent with the widespread adoption of algorithmic architectures.

Consequence: nonlinear shock amplification. The combination of synchronization and time compression produces stress episodes whose magnitude exceeds what underlying fundamentals would justify. A modest shock — a slightly off-consensus macro print, a monetary-policy surprise — can trigger a cascade of algorithmic repositioning whose cumulative effect amplifies volatility far beyond the initial signal. Threshold effects are particularly pronounced: as long as volatility remains below certain levels (VIX < 20, for example), liquidity algorithms maintain activity. Once thresholds are breached, withdrawal is simultaneous and abrupt — a “liquidity cliff” behavior documented by the BIS (Quarterly Review, March 2024) and visible in order-book depth data during VIX > 30 sessions in 2024–2025.
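The threshold effect can be sketched with a toy order book. This is a minimal illustration under stated assumptions, not a calibrated model: each market maker quotes a fixed size until a volatility index reaches its own withdrawal threshold, and the two populations below (`homogeneous`, `heterogeneous`) are hypothetical. The threshold of 20 echoes the VIX example in the text.

```python
def available_depth(vol_index: float, withdrawal_thresholds: list[float],
                    size_per_maker: float = 1.0) -> float:
    """Total quoted size from market makers still active at a given volatility level.

    Each maker quotes size_per_maker while the index is below its own
    withdrawal threshold and quotes nothing once the threshold is reached.
    """
    active = sum(1 for t in withdrawal_thresholds if vol_index < t)
    return active * size_per_maker


# Hypothetical populations of ten market makers.
homogeneous = [20.0] * 10                             # everyone exits at 20
heterogeneous = [15.0 + 2.0 * i for i in range(10)]   # exits spread from 15 to 33

for level in (14, 19, 21, 30):
    print(level,
          available_depth(level, homogeneous),
          available_depth(level, heterogeneous))
```

With identical thresholds, depth goes from full to zero between 19 and 21; with dispersed thresholds it declines one maker at a time. The cliff comes from the homogeneity of the rules rather than from any single rule.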

- Efficiency gains: 20–30% reduction in operating expenses in standardized segments. Source: McKinsey Global Institute, FSB (2024).

- Time compression: average reaction time to macro releases reduced from minutes (2015) to seconds (2025). Source: market data, BIS.

- Flash crashes: frequency tripled between 2019 and 2025. Source: IMF GFSR, October 2025.

- Order-book depth: down 40–60% during VIX > 30 sessions despite rising volumes. Source: market data.

- Concentration: the top five financial AI infrastructure providers account for ~70% of the market (cloud, models, data). Source: FSB estimates, 2024.

Growing model homogenization + compressed reaction times + conditional liquidity + infrastructure concentration → systemic risk does not decline with AI adoption — it is reconfigured toward shorter but more intense stress episodes, with threshold effects that are difficult to anticipate.

What the Consensus Celebrates — and the Systemic Risk It Downplays

The dominant narrative, promoted by fintech firms, major banks, and parts of the regulatory community, rests on a compelling logic: AI, by refining information quality and compressing processing times, mechanically enables more efficient capital allocation and reduces pockets of inefficiency. Better-informed markets would therefore be inherently more resilient. This diagnosis is not unfounded: microeconomic efficiency gains are real and documented.

Its limitation lies in an implicit assumption: that informational efficiency equals systemic stability. Finance is not purely an informational problem — it also involves coordination among actors, liquidity management, and time-structure dynamics. A market where all participants receive the same information at the same instant and react the same way is not more stable — it is more fragile, because the diversity-of-response mechanism that absorbs shocks in heterogeneous markets is eroded.

The consensus conflates two fundamentally distinct notions: efficiency (ability to process information) and resilience (ability to absorb shocks). AI improves the former and may degrade the latter. The BIS (Annual Report 2024) frames this distinction by noting that “the technological sophistication of financial markets has progressed faster than the sophistication of their regulation and stabilization mechanisms” — locating risk not in technology itself but in the gap between adoption speed and the adaptation speed of safeguards.

Confusing technological performance with risk reduction. AI improves informational efficiency at the individual level, but the resulting behavioral synchronization amplifies contagion and procyclicality at the system level. Risk does not disappear — it becomes concentrated in time (shorter but more violent crises) and in space (dependence on a limited number of infrastructures). Visible gains (costs, execution) mask latent fragilities (synchronization, conditional liquidity, concentration) that surface only under stress.

| | “AI Stabilizes Finance” View | Micro/Macro Paradox View |
|---|---|---|
| Implicit assumption | Informational efficiency = systemic resilience | Efficiency ≠ resilience; synchronization erodes stabilizing diversity |
| Observed signal | Lower costs, improved execution, liquid markets | More flash crashes, conditional liquidity, infrastructure concentration |
| Nature of risk | Residual and declining with adoption | Reconfigured, time-concentrated and synchronized across actors |
| Stress scenario | AI absorbs shocks faster | AI amplifies and accelerates the first shock wave |
| Key variable | Operating costs, processing speed | Model diversity, order-book depth under stress, infrastructure concentration |

Algorithmic Bias, Infrastructure Concentration, and Procyclicality: The Three Risk Amplifiers

The micro/macro paradox is amplified by three structural factors that reshape the nature and distribution of risk.

Biases displaced, not eliminated. AI is often presented as a way to neutralize human cognitive biases such as overconfidence, confirmation bias, and loss aversion. In reality, these biases are not removed but transferred: they become embedded in training-data selection, model architecture, and the objective functions assigned to algorithms. An ECB working paper (2024, “Model Risk in the Age of Machine Learning”) documents this phenomenon, showing that AI-based credit scoring models reproduce, and sometimes amplify, historical biases present in training data. Automated risk-scoring systems project historical regularities and struggle to incorporate regime breaks, particularly those triggered by monetary-policy shifts or exogenous shocks. The shift from human biases to algorithmic biases does not reduce exposure to collective judgment errors; it changes their form and speed of propagation.

Infrastructure concentration and operational risk. The adoption of financial AI is accompanied by increasing concentration of technical infrastructure. The five largest providers of cloud, models, and data account for roughly 70% of the financial AI market (FSB estimates, 2024). This concentration creates a new type of operational risk: a failure, cyberattack, or systemic bias affecting a dominant provider can instantly propagate across the entire financial system. The FSB (2024) labels this risk “third-party concentration risk” and notes that it largely falls outside traditional prudential frameworks, which were designed to regulate individual institutions rather than cross-cutting infrastructure providers. The structural concentration of the financial system driven by AI amplifies this asymmetry.
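Concentration of this kind is usually tracked with two simple statistics. The sketch below computes a top-k concentration ratio and the Herfindahl-Hirschman index; the index is a standard measure added here for illustration and is not cited in the FSB estimate. The provider shares are hypothetical, chosen only so that the top five sum to the roughly 70% figure quoted above.

```python
def concentration_ratio(shares: list[float], k: int = 5) -> float:
    """Combined market share of the k largest providers (same unit as the inputs)."""
    return sum(sorted(shares, reverse=True)[:k])


def herfindahl_index(shares: list[float]) -> float:
    """Herfindahl-Hirschman index: sum of squared shares.

    With shares in percentage points the index reaches 10,000 for a monopoly.
    """
    return sum(s ** 2 for s in shares)


# Hypothetical provider shares in percent; they sum to 100.
example_shares = [25, 20, 10, 10, 5, 5, 5, 5, 5, 5, 5]

print(concentration_ratio(example_shares))  # top-five share
print(herfindahl_index(example_shares))     # squared-share index
```

The ratio answers "how much sits with the top five", while the index also reflects how unevenly that share is split among them, which matters when a single dominant provider is the point of failure.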

Procyclicality amplified by automation. Risk management algorithms are, by construction, procyclical: they reduce exposure when volatility rises and increase it when volatility falls — behavior that stabilizes individual portfolios but amplifies market moves at the aggregate level. This phenomenon is not new (2000s VaR models showed similar dynamics), but AI intensifies it through speed and scale of adoption. The BIS (Annual Report 2024) notes that algorithmic procyclicality creates a “stability paradox”: markets appear more stable in normal regimes (low volatility, abundant liquidity) precisely because risk-compression mechanisms accumulate silently — until a threshold is crossed and the reversal becomes more abrupt the longer it was delayed. Governance of automated systems is becoming a central financial stability issue.
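One common way this procyclicality arises is volatility targeting, where exposure is scaled inversely to realized volatility. The sketch below is an assumption-laden illustration of that single rule, not a description of any specific institution's risk system; `target_vol`, `max_leverage`, and the function names are hypothetical.

```python
def target_exposure(realized_vol: float, target_vol: float = 0.10,
                    max_leverage: float = 1.5) -> float:
    """Exposure (as a fraction of capital) under a simple volatility-targeting rule."""
    if realized_vol <= 0:
        raise ValueError("realized_vol must be positive")
    # Scale exposure so that exposure * realized_vol equals target_vol, up to a cap.
    return min(max_leverage, target_vol / realized_vol)


def forced_trade(capital: float, vol_before: float, vol_after: float) -> float:
    """Notional the rule buys (positive) or sells (negative) when volatility changes."""
    return capital * (target_exposure(vol_after) - target_exposure(vol_before))


# A doubling of volatility from 10% to 20% halves the target exposure.
print(target_exposure(0.10), target_exposure(0.20))
print(forced_trade(100.0, 0.10, 0.20))
```

For one portfolio the rule stabilizes risk. When many portfolios apply a similar rule, the resulting sales arrive together and in the same direction, so the individual stabilizer becomes an aggregate amplifier.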

Implications for Reading the Current Cycle

For financial stability. In the early-2026 environment, marked by still-positive real rates and incomplete monetary normalization, liquidity-dependent assets and infrastructures remain exposed to abrupt adjustments. AI acts as a regime amplifier: it accelerates adjustments when the cycle turns, magnifies repositioning when expectations shift, and compresses decision windows as stress rises. This dynamic interacts with the lagged effects of restrictive monetary policy and with conditional liquidity during rapid shocks. Any diagnosis of the macro cycle therefore needs to factor time compression into its risk assessment.

For regulation. The gap between the speed of AI adoption by financial institutions and the pace of regulatory adaptation is itself a risk factor. Existing prudential frameworks (Basel III/IV, MiFID II) were designed to regulate human behavior at human time scales. Automation operates at sub-second horizons, with model interactions regulators cannot observe in real time. The FSB (2024) recommends a supervisory approach focused on model diversity and infrastructure resilience rather than individual conduct compliance — a regulatory paradigm shift whose implementation remains nascent.

For asset allocation. The algorithmic amplification of shocks has direct implications for portfolio risk management. Cross-asset correlations tend to rise during stress (the so-called “correlation breakdown,” in which correlations estimated in calm periods stop holding), an effect intensified by synchronized algorithmic repositioning. Traditional diversification strategies (multi-asset, multi-geography) therefore lose effectiveness in stress regimes dominated by algorithms. Any allocation framework built on the dynamics of market expectations needs to account for this growing synchronization.

Invalidation condition. This analytical framework loses relevance if effective diversification of models and architectures reduces synchronization of algorithmic behavior — a possible scenario if regulators impose model-diversity constraints or if innovation produces genuinely differentiated approaches. It would also be invalidated if stabilization mechanisms (enhanced circuit breakers, real-time supervision, lender-of-last-resort liquidity adapted to algorithmic speeds) catch up with adoption velocity. Conversely, further infrastructure concentration, slower regulatory adaptation, or a severe liquidity shock (in a context of positive real rates and incomplete monetary normalization) would amplify the vulnerabilities described.

Algorithmic Fragility Indicators to Monitor

Systemic risk linked to financial AI is not captured by traditional indicators (VaR, prudential ratios) but by market microstructure metrics that reflect liquidity quality and synchronization intensity. Five complementary indicators help detect resilience deterioration before it materializes into stress episodes.

- Intraday dispersion of reactions to macro releases (standard deviation of price responses in the first minutes) measures the diversity of algorithmic reactions: a structural decline in dispersion signals rising synchronization.

- Order book depth under stress (available size at best prices when VIX exceeds 25–30) is the most direct indicator of conditional liquidity: declining depth, even if volumes rise, signals rationing.

- Cross-asset correlations during high-volatility periods measure repositioning convergence: rising correlations among typically uncorrelated asset classes signal simultaneous algorithmic selling.

- Market share of the five largest financial AI infrastructure providers (cloud, models, data) measures operational concentration risk, an indicator to monitor in FSB reports and regulatory publications.

- Frequency of liquidity dislocation episodes (flash crashes, price/NAV gaps in ETFs, quote voids) is the most direct empirical signal of system fragility: its upward trend since 2019 (×3, IMF data) is the clearest warning sign.
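Two of these indicators reduce to short calculations. The sketch below is a minimal illustration using only the Python standard library: reaction dispersion as a population standard deviation, and cross-asset correlation restricted to observations where a volatility index exceeds a threshold. The function names, the default threshold of 25, and the input layout (parallel lists of observations) are assumptions made for the example.

```python
from statistics import mean, pstdev


def reaction_dispersion(price_responses: list[float]) -> float:
    """Population standard deviation of price responses to one release."""
    return pstdev(price_responses)


def pearson(x: list[float], y: list[float]) -> float:
    """Pearson correlation of two equal-length, non-constant series."""
    mx, my = mean(x), mean(y)
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    var_x = sum((a - mx) ** 2 for a in x)
    var_y = sum((b - my) ** 2 for b in y)
    return cov / (var_x * var_y) ** 0.5


def stress_correlation(x: list[float], y: list[float],
                       vol_index: list[float], threshold: float = 25.0) -> float:
    """Correlation of x and y using only observations where vol_index > threshold."""
    pairs = [(a, b) for a, b, v in zip(x, y, vol_index) if v > threshold]
    if len(pairs) < 2:
        raise ValueError("need at least two stress observations")
    xs, ys = zip(*pairs)
    return pearson(list(xs), list(ys))
```

Tracked over time, a falling `reaction_dispersion` across successive releases and a `stress_correlation` well above the full-sample correlation would both point to rising synchronization.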

Three Time Horizons to Track Risk Transformation

Short horizon (0–6 months): key indicators include the frequency of flash crashes and mini liquidity dislocations, order book depth during high-volatility sessions (VIX > 25), and dispersion of market reactions to macro releases (declining dispersion signals rising synchronization). The short-term risk is a liquidity dislocation episode amplified by algorithmic synchronization, in a context of positive real rates that makes liquidity structurally more conditional.

Cycle horizon (1–3 years): the structural issue is the pace of adaptation of regulatory and stabilization frameworks. If prudential regimes effectively incorporate infrastructure concentration and model diversity (FSB 2024 recommendations), systemic risk can be contained. If regulatory adaptation lags — historically common in financial innovation cycles — risk accumulates silently until the next severe stress test. Interaction with the real economic cycle will determine whether that test occurs in an orderly slowdown or amid more intense financial strain.

Structural horizon (5+ years): AI durably reshapes the architecture of the financial system — concentration of flows, compression of timeframes, automation of price formation. If this transformation continues without proportional adaptation of stabilization mechanisms, the financial system could evolve toward a state of “stable fragility”: prolonged phases of apparent calm (low volatility, abundant liquidity, high efficiency) punctuated by rupture episodes that are more intense and faster than in previous cycles. This scenario — a financial “moderation paradox” — aligns with BIS observations on volatility compression before historical crises. Regular monitoring via the weekly macro update incorporates analysis of liquidity and market volatility conditions.

AI does not reduce financial risk — it redistributes, concentrates, and accelerates it. Microeconomic efficiency gains are real and documented, but they coexist with a structural transformation of systemic risk: synchronized behavior (shared models), time-compressed crises (hours instead of days), conditional liquidity (abundant in calm, rationed in stress), and infrastructure concentration (dependence on a few dominant providers). The consensus conflates informational efficiency with systemic resilience — a distinction that becomes critical in a positive real-rate environment where liquidity is structurally more conditional. The primary risk is not a clearly identifiable shock but “stable fragility”: prolonged calm phases punctuated by rupture episodes more intense than fundamentals alone would justify.

Robust: The composition paradox (micro efficiency / macro fragility) is formalized by the BIS and the FSB. Synchronization of algorithmic behavior is documented in market data (reaction time compression, higher stress correlations). The multiplication of flash crashes (×3 between 2019 and 2025) is observable. Concentration of financial AI infrastructure (~70% across 5 providers) is measurable. Procyclicality of risk-management models has been established since the 2000s and is amplified by automation.

Uncertain: The stress threshold at which synchronization triggers systemic dislocation (rather than localized flash crashes) has not yet been reached in the current cycle. Regulators’ capacity to adapt stabilization mechanisms to algorithmic speed is still being assessed. The impact of potential model diversification (if innovation produces genuinely differentiated approaches) on synchronization reduction remains hypothetical. The possibility of a “moderation paradox” (prolonged calm followed by abrupt rupture) is plausible but not predictable in timing.

Assessing AI’s impact on finance through the micro/macro paradox — rather than solely through efficiency gains — provides a more comprehensive analytical framework to evaluate how the structure of financial risk is evolving and to anticipate systemic vulnerability points in the current cycle.

- AI does not reduce financial risk — it redistributes, concentrates, and accelerates it. Microeconomic efficiency gains (20–30% cost reduction) coexist with a structural reconfiguration of systemic risk.

- The central mechanism is the composition paradox: model convergence erodes response diversity, turning modest shocks into episodes of nonlinear amplification.

- Liquidity becomes procyclical and conditional — abundant in calm regimes, rationed in stress — a dynamic amplified by time-compressed algorithmic reactions.

- Concentration of financial AI infrastructure (~70% across 5 providers) creates cross-system operational risk not captured by existing prudential frameworks.

- This framework is invalidated if effective model diversification reduces synchronization, or if stabilization mechanisms catch up with adoption speed — conditions not currently met.

Frequently Asked Questions on AI and Financial Stability

Does artificial intelligence make financial markets more stable?

AI improves each participant’s informational efficiency (faster processing, lower costs, optimized execution), but it does not automatically strengthen system-wide stability. Synchronization of algorithmic behavior — driven by model homogenization — reduces diversity of responses to shocks, amplifying stress episodes. The BIS and the FSB (2024) explicitly distinguish individual efficiency from systemic resilience.

Why can automation amplify financial crises?

When most participants rely on similar models, repositioning signals converge. The same shock triggers identical reactions across hundreds of algorithms within milliseconds. This synchronization compresses crisis timelines (hours instead of days) and turns liquidity into a conditional variable: abundant in calm periods, rationed in stress. The mechanism resembles an emergency exit — sufficient under normal conditions, insufficient when everyone rushes out at once.

What is the link between artificial intelligence and flash crashes?

The frequency of flash crashes tripled between 2019 and 2025 (IMF data). AI contributes through reaction-time compression (milliseconds) and the simultaneous withdrawal of liquidity providers when volatility crosses predefined thresholds. A flash crash is not a malfunction; it is the normal manifestation of conditional liquidity in an algorithm-dominated system.

How is financial AI regulated?

Existing prudential frameworks (Basel III/IV, MiFID II) were designed for human behavior at human time scales. Automation operates in milliseconds, with interactions regulators cannot observe in real time. The FSB (2024) recommends shifting from supervision centered on individual compliance to supervision centered on model diversity and infrastructure resilience — a transition that remains in its early stages.

Updated: 20 March 2026

This article provides economic and financial analysis for informational purposes only. It does not constitute investment advice or a personalized recommendation. Any investment decision remains the sole responsibility of the reader.